
I also added anisotropic scaling as an alternative to using the rotation and scale information, so that if you are scaling something like a graph which has a different X and Y scale, you can dynamically change both scales by simultaneously stretching in horizontal and vertical directions.Hopefully all newer phones will have a true 2D touch sensor.) (In spite of misinformation on the Web, there is also no firmware or software update that can fix this problem, it is a hardware limitation. keep them on a leading or a trailing diagonal), or to disallow rotation on these devices. There is no way around this other than to keep the two fingers in the same two relative quadrants (i.e. The quirky behavior results from "axis snapping" when the two points get close together in X or Y, and "ordinate confusion" where (x1,y1) and (x2,y2) get confused for (x1,y2) and (x2,y1). NOTE: rotation is quirky on older touchscreen devices that use a Synaptics or Synaptics-like "2x1D" sensor (G1, MyTouch, Droid, Nexus One) and not a true 2D sensor like the HTC Incredible or HTC EVO 4G. In fact all of rotate, scale and translate can be simultaneously adjusted based on relative movements of the first two touch points. The controller was recently updated to support pinch-rotate, allowing you to physically twist objects using two touch points on the screen.Compare pinch-zoom in Google Maps to Fractoid (in Market) to see what I mean - Fractoid uses this multitouch controller code. This is the only natural way to implement pinch-zoom, and subconsciously feels much more natural than scaling about the center of the screen. This also means that you can do a combined pinch-drag operation that will simultaneously translate and scale an object. It correctly centers the pinch operation about the center of the pinch (not about the center of the screen, as with most of the "Google Experience" apps that added their own pinch-zoom capability in Android-2.x). 
The controller also supports pinch-zoom, including tracking the transformation between screen coordinates and object coordinates.This MultiTouch Controller class simplifies getting access to these events for applications that just want event positions and up/down status. All this means there are a lot of API quirks you have to be aware of. It simplifies the somewhat messy and inconsistent MotionEvent touch point API - this API has grown from handling single touch points, potentially with packaged event history (Android 1.6 and earlier) to multiple indistinguised touch points (Android 2.0) to the potential for handling multiple touch points that are kept distinct even if lower-indexed touchpoints are raised (and thus each point has an index and generates its own indexed touch-up/touch-down event).It filters out "event noise" on Synaptics touch screens (G1, MyTouch, Nexus One) - for example, when you have two touch points down and lift just one finger, each of the ordinates X and Y can be lifted in separate touch events, meaning you get a spurious motion event (or several events) consisting of a sudden fast snap of the touch point to the other axis before the correct single touch event is generated.

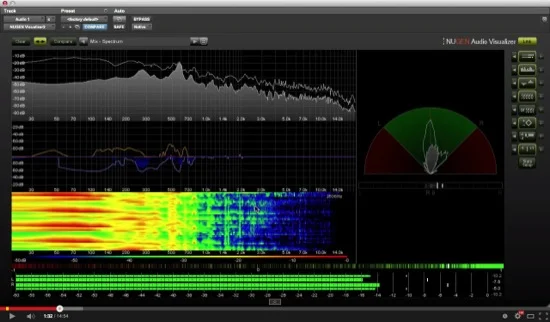

This MultiTouch Controller class makes it much easier to write multitouch applications for Android: MTPhotoSortr, a demo app showing how to use the MultiTouch Controller class.MTVisualizer, the source code for the app "MultiTouch Visualizer 2" on Google Play.MTController, the MultiTouch Controller class for Android (see below).This project currently comprises three Android sub-projects: Welcome to the android-multitouch-controller project.
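
The pinch behavior described on this page (scale, rotation and translation all derived from the first two touch points, and centered on the pinch rather than on the screen) can be sketched in plain Java. This is only an illustration under stated assumptions: `PinchSketch`, `Delta`, `between` and `apply` are hypothetical names and not the MultiTouch Controller's actual API, no Android classes are used, and the anisotropic-scaling mode is left out.

```java
/**
 * Hypothetical helper illustrating pinch-centered scale/rotate/translate.
 * NOT the MultiTouch Controller API; names and structure are assumptions.
 */
final class PinchSketch {

    /** Change between two snapshots of the same two touch points. */
    static final class Delta {
        final double scale;        // ratio of finger distances (1.0 = unchanged)
        final double angle;        // radians, positive = from +x toward +y
                                   // (appears clockwise on a y-down screen)
        final double prevCenterX, prevCenterY; // pinch midpoint before the move
        final double centerX, centerY;         // pinch midpoint after the move

        Delta(double scale, double angle,
              double prevCenterX, double prevCenterY,
              double centerX, double centerY) {
            this.scale = scale;
            this.angle = angle;
            this.prevCenterX = prevCenterX;
            this.prevCenterY = prevCenterY;
            this.centerX = centerX;
            this.centerY = centerY;
        }
    }

    /** Compares points A and B before (…0) and after (…1) a move. */
    static Delta between(double ax0, double ay0, double bx0, double by0,
                         double ax1, double ay1, double bx1, double by1) {
        double dx0 = bx0 - ax0, dy0 = by0 - ay0;
        double dx1 = bx1 - ax1, dy1 = by1 - ay1;
        double d0 = Math.hypot(dx0, dy0);
        double d1 = Math.hypot(dx1, dy1);

        // Guard against coincident starting points (no meaningful scale).
        double scale = d0 > 0 ? d1 / d0 : 1.0;

        // Difference of the two finger-to-finger directions, wrapped to [-pi, pi].
        double raw = Math.atan2(dy1, dx1) - Math.atan2(dy0, dx0);
        double angle = Math.atan2(Math.sin(raw), Math.cos(raw));

        return new Delta(scale, angle,
                (ax0 + bx0) / 2, (ay0 + by0) / 2,
                (ax1 + bx1) / 2, (ay1 + by1) / 2);
    }

    /**
     * Moves a screen point through the pinch: rotate and scale about the old
     * pinch midpoint, then carry the result along to the new midpoint.
     */
    static double[] apply(Delta d, double x, double y) {
        double rx = x - d.prevCenterX, ry = y - d.prevCenterY;
        double cos = Math.cos(d.angle), sin = Math.sin(d.angle);
        double sx = d.scale * (rx * cos - ry * sin);
        double sy = d.scale * (rx * sin + ry * cos);
        return new double[] { d.centerX + sx, d.centerY + sy };
    }
}
```

Because the transform is taken about the pinch midpoint, the object points under each finger stay under that finger: applying the result of `between(...)` to either starting touch point yields its new position. Scaling about the screen center would not have this property.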
